The role of AI in inclusive recruitment

How can you use AI effectively in recruitment? Find out more in this blog.

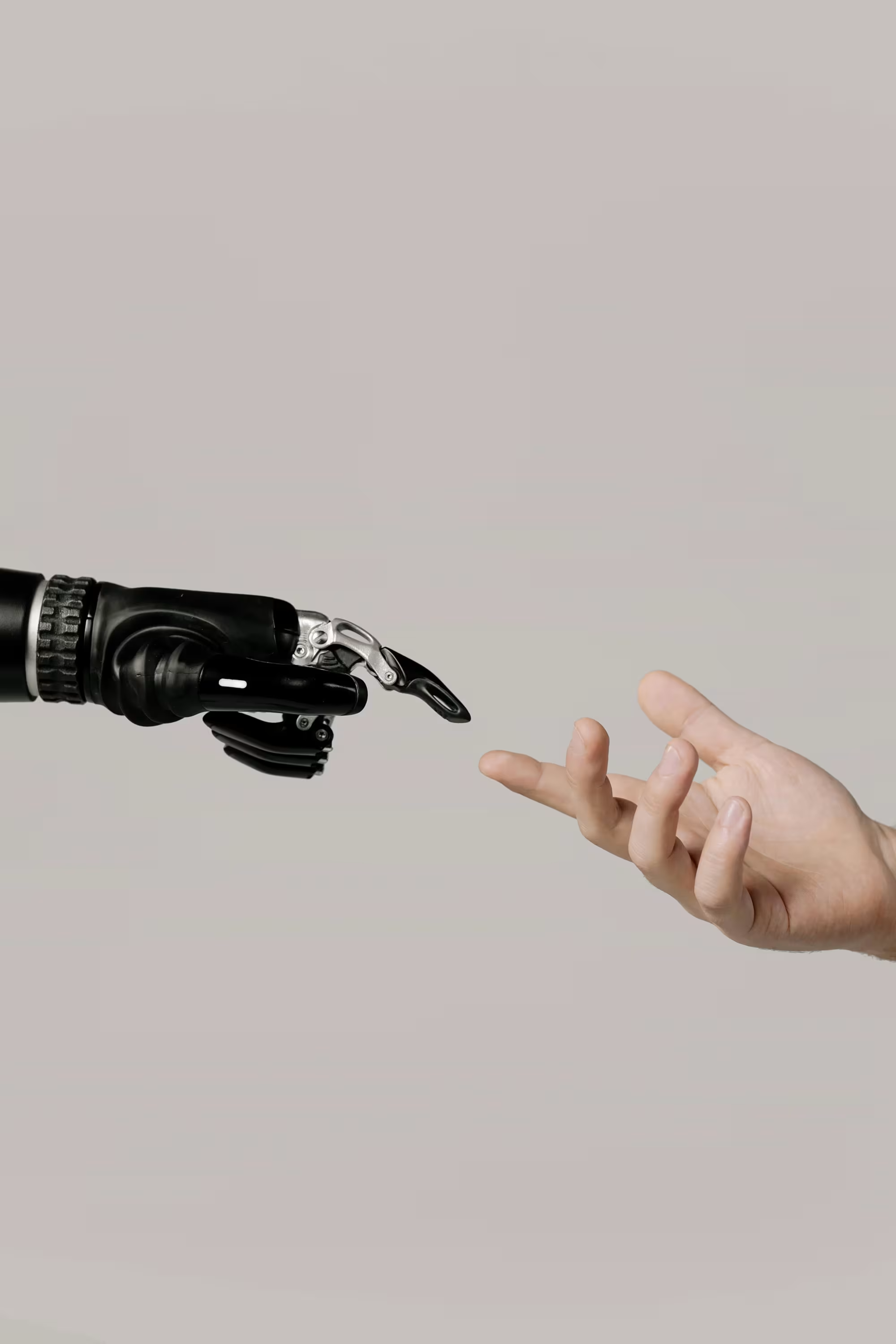

Artificial Intelligence (AI), such as ChatGPT, is radically transforming the workplace. When used strategically in recruitment, AI can help promote diversity and inclusion (D&I). However, there are also a number of challenges to consider. This blog covers both the benefits and the pitfalls.

What is AI, and why is it used in recruitment?

AI is a technology that mimics human intelligence. It learns, thinks, and can even correct itself. With a simple text instruction (a prompt), you can have a generative AI produce text or an image. In recruitment, AI can help you write objective and compelling job postings and reduce bias, and it can suggest improvements to your text. Additionally, AI can help maintain a consistent tone of voice, allowing you to quickly draft a job posting that aligns with your brand and attracts the best candidates.

Benefits of AI for diversity and inclusion in recruitment

A diverse team draws on a broader range of complementary talents. To achieve this, it helps to recruit objectively, but this can be difficult due to unconscious biases that creep into the process. As a result, you may miss out on candidates who would enrich the team precisely because they bring a different perspective. AI can help by making the selection process fairer and more objective, ensuring you don’t miss out on talent.

An objective selection process

You can use AI to create candidate profiles based on skills and experience and match them to the job requirements. Suppose you have a job opening for a software developer. AI can automatically analyze resumes and compare technical skills, such as programming knowledge and experience with tools, to the job requirements. In addition, AI helps minimize personal biases, such as a preference for applicants you've worked with in the past.

AI can also promote objectivity by evaluating candidates on measurable criteria, such as skills and experience, rather than on personal details such as which school they attended.

Suppose you unconsciously favor candidates from your alma mater, giving other qualified applicants a lower chance of success. AI can help prevent this by analyzing only relevant qualifications, so that every candidate is evaluated against the same criteria. This creates a fairer selection process, in which you assess candidates based on their competencies rather than a personal "click" with the selection committee.
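To make the idea of scoring on measurable criteria concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular recruitment tool works: the `Candidate` fields, the `score` function, and the weighting (skill overlap plus a fixed bonus for enough experience) are all hypothetical. The point is simply that name and school are stored but never read during scoring.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    name: str    # personal detail, deliberately ignored by score()
    school: str  # personal detail, deliberately ignored by score()
    skills: set[str] = field(default_factory=set)
    years_experience: int = 0


def score(candidate: Candidate, required_skills: set[str], min_years: int) -> float:
    """Score a candidate on skills and experience only (hypothetical weighting)."""
    matched = candidate.skills & required_skills
    skill_score = len(matched) / len(required_skills) if required_skills else 0.0
    # Fixed bonus when the candidate meets the minimum experience requirement.
    experience_bonus = 0.5 if candidate.years_experience >= min_years else 0.0
    return round(skill_score + experience_bonus, 2)


# Hypothetical job opening for a software developer.
required = {"python", "sql", "git", "docker"}

a = Candidate("Alex", "University A", {"python", "sql", "git"}, years_experience=4)
b = Candidate("Sam", "University B", {"python", "sql", "git"}, years_experience=4)

# Same skills and experience give the same score, whatever the name or school.
print(score(a, required, min_years=3), score(b, required, min_years=3))
```

A real screening tool would need far more care, for example in how skills are extracted from a resume, but the same principle applies: decide up front which fields count and leave the rest out of the calculation.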

Identifying underrepresented groups

AI can help identify underrepresented groups within your organization. For example, some AI-based solutions can analyze basic workforce data and then expand the analysis by creating questionnaires to assess other factors such as introversion, disabilities, creativity, ethnic background, and interests. You can use the results to decide where the composition of your team needs attention, with AI as a strategic partner. These are two examples of how AI can streamline recruitment tasks.
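The first step of such an analysis, working out how each group is represented in basic workforce data, can be sketched in a few lines of Python. Everything here is assumed for illustration: the employee records, the `benchmark` shares you want to compare against, and the `tolerance` margin are hypothetical values, and the function names are not taken from any specific product.

```python
from collections import Counter


def representation_shares(employees: list[dict], attribute: str) -> dict[str, float]:
    """Return each group's share of the workforce for one attribute."""
    counts = Counter(person[attribute] for person in employees)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def underrepresented(shares: dict[str, float], benchmark: dict[str, float],
                     tolerance: float = 0.05) -> list[str]:
    """List groups whose share falls more than `tolerance` below the benchmark."""
    return sorted(
        group for group, target in benchmark.items()
        if shares.get(group, 0.0) < target - tolerance
    )


# Hypothetical workforce data: 8 men and 2 women.
employees = [{"gender": "man"}] * 8 + [{"gender": "woman"}] * 2
# Hypothetical reference shares, for example taken from the labor market.
benchmark = {"man": 0.5, "woman": 0.5}

shares = representation_shares(employees, "gender")
print(shares)
print(underrepresented(shares, benchmark))
```

Which benchmark is appropriate is a policy decision rather than a technical one, and personal data like this should only be collected and processed in line with applicable privacy rules.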

AI bias? How is that possible?

Keep in mind, however, that existing biases may be reinforced by the way an AI model is trained. This training requires billions of data points that the model uses to generate its output. By definition, this data is historical and often contains outdated concepts. For example, if all the CEOs in this training data are men with American names, the model does not know that a woman or someone with a different-sounding name could also be eligible for this position.

Many large companies have already encountered bias, which has not been good for their reputation. For example, an experimental resume-screening tool at Amazon, trained mostly on resumes submitted by men, learned to downgrade resumes containing the word "women's", so male candidates were favored over female candidates. Midjourney, an AI that generates photorealistic images, discriminated in the images it created based on both age and gender. Older men were depicted in leadership roles, while older women were absent from work environments. It seemed as though older women were becoming invisible in the workplace, while older men were advancing their careers. Google's systems showed a similar pattern: in one study, ads for the highest-paying jobs were shown more often to men than to women.

Eliminating AI and human bias

Biases in recruitment processes are not only counterproductive but also unethical. Therefore, adhere to ethical guidelines to avoid any appearance of discrimination. This is an important responsibility for HR.

Fortunately, there are ways to minimize bias in AI as much as possible. Transparency is essential here: you need to know which programs use AI, how an AI model is trained, and how it makes decisions. Tools can also help. One example is Textmetrics, a Netherlands-based company that provides AI-driven software with bias detection and correction suggestions.
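Part of knowing how an AI model makes decisions is checking what it actually decides. One simple check, sketched below in Python, is to compare how often an automated screen selects candidates from each group. This is only an illustration under assumptions: the decision data is made up, the function names are hypothetical, and a large gap between groups is a signal to investigate further, not proof of discrimination.

```python
def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Map each group to the share of its candidates that were selected."""
    totals: dict[str, int] = {}
    selected: dict[str, int] = {}
    for group, was_selected in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + (1 if was_selected else 0)
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratio(rates: dict[str, float]) -> float:
    """Lowest selection rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical screening outcomes: (group, selected by the AI screen?)
decisions = (
    [("men", True)] * 6 + [("men", False)] * 4
    + [("women", True)] * 3 + [("women", False)] * 7
)

rates = selection_rates(decisions)
print(rates)
print(rate_ratio(rates))
```

Running a check like this regularly, and on every new version of a tool, makes it easier to spot skewed outcomes before they affect real candidates.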

Promoting D&I with AI

AI is a powerful ally in the pursuit of greater diversity and inclusion in recruitment. Be mindful of how you use data, ensure transparency, and avoid falling into the trap of bias. When used responsibly, AI can make a real contribution to diversity within your organization.
